Evaluate Your Backup Strategy, Speed Up Recovery, And Save The Day

We all depend on networks and data for nearly everything. For that reason, those responsible for the critical components that power the world know how important a solid backup policy is. Ideally, they also understand the value of redundancy and data diversity. This article briefly details various ways to think about, plan, and build proper backups.

Testing Backups

Before I go into any detail about backup strategies, the most important thing to consider is this: any backup process that is not tested regularly, regardless of the hardware, software, and expense involved, is absolute garbage. It doesn’t matter how many fail-overs, redundancies, or green check marks on automated reports you have. Testing backups takes only a few minutes per month, and it is essentially your most valuable paycheck insurance policy.

Backup Topology

The second most important thing to consider is a well-thought-out answer to the “all eggs in one basket” challenge when planning or designing a backup strategy. I used to get asked often whether or not I had accounted for various nuclear strike and natural disaster scenarios. My answer used to begin with something along the lines of, “If that happens, running backup recovery is probably the last thing on my mind.” Theatrics aside, the question was still met with technical assurance, as remote off-site backups are, of course, important. Consider, though, the likelihood of a natural disaster consuming a metro area versus the likelihood of relying on only one backup software solution and having it fail when you need it most. Which is more statistically probable (and common)?

Because any single backup method may fail at any time, it makes sense to solve this challenge while also tackling a few of the other priorities, such as the geo-location, media, type, and frequency of backups. By diversifying the data with this in mind, you are also creating a strategy around recovery speed and the complexity and number of steps required. You’ll often read documents describing effective backup strategies that use a “3-2-1” approach. However, many of these refer to the media itself and the practice of duplicating the same backup data to many locations. These articles also address differences in incremental versus differential backups and so on. While these topics are important, it also makes sense to explore a simple way to approach all of this with even more cards to play when a crisis occurs.
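
To make the distinction concrete, here is a small Python sketch that checks a backup inventory against the usual reading of 3-2-1 (three copies, two media types, one off-site) and then adds the two diversity checks argued for here: more than one tool and more than one layer. The `BackupCopy` record and the example inventory are assumptions made up for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BackupCopy:
    layer: str     # e.g. "application", "os", "vm", "block"
    tool: str      # the software that produced this copy (hypothetical names below)
    media: str     # e.g. "disk", "tape", "object-storage"
    offsite: bool  # True when stored away from the primary site


def diversity_report(copies: list[BackupCopy]) -> dict[str, bool]:
    """Check the classic 3-2-1 counts plus tool and layer diversity."""
    return {
        "three_copies": len(copies) >= 3,
        "two_media": len({c.media for c in copies}) >= 2,
        "one_offsite": any(c.offsite for c in copies),
        "multiple_tools": len({c.tool for c in copies}) >= 2,
        "multiple_layers": len({c.layer for c in copies}) >= 2,
    }


# The same backup duplicated three times passes 3-2-1 but fails on diversity.
inventory = [
    BackupCopy("os", "tool-a", "disk", offsite=False),
    BackupCopy("os", "tool-a", "tape", offsite=False),
    BackupCopy("os", "tool-a", "object-storage", offsite=True),
]
report = diversity_report(inventory)
```

In this example the first three checks pass while `multiple_tools` and `multiple_layers` are both `False`, which is exactly the single-point-of-failure case described above.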

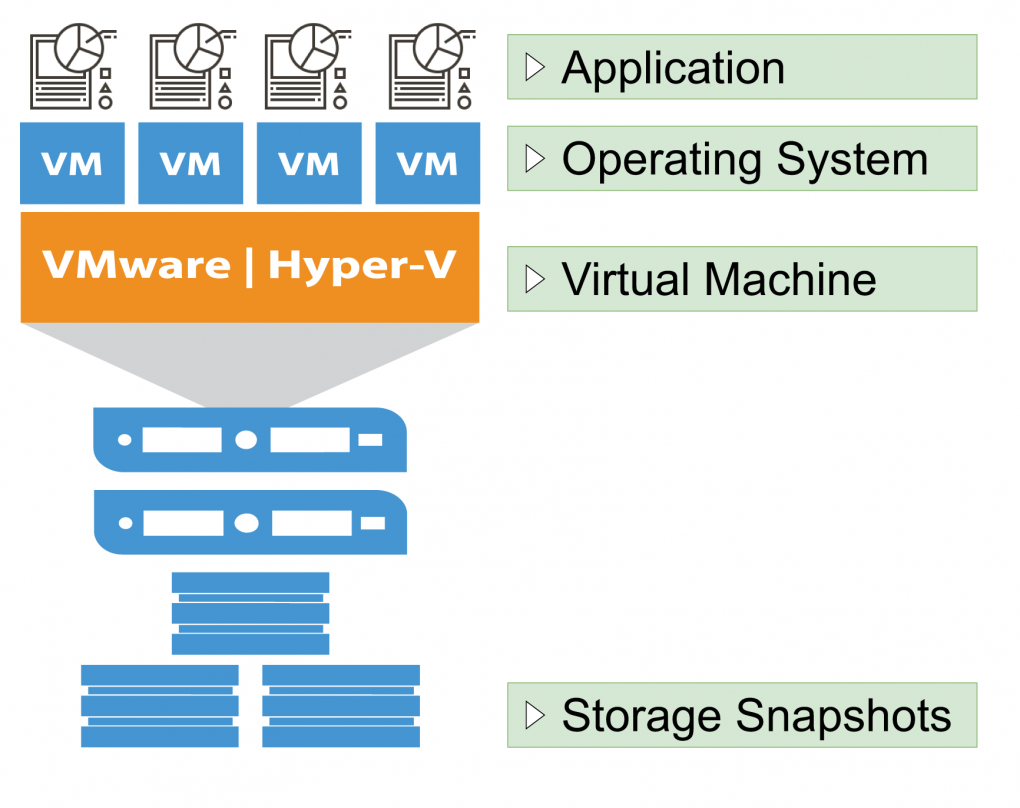

Let’s explore the layers from the top.

Application

Application layer backups commonly include backup processes built into a software solution. A common example of this is a point-of-sale server. Software like Opera, Aloha, QuickBooks, and many other mission-critical server-based software suites will almost always include a setting to activate nightly backups that compress a snapshot of the day’s activity. This compressed data does not include any operating system protection and is only used for restoration of the specific application itself.

- Likelihood you’ll restore data from this backup method: HIGH

- Business recovery speed: MEDIUM (Other backup methods, depending on the issue, may be faster)

- Insurance policy score: MEDIUM (While this backup method usually contains a great deal of mission-critical data, it does not include everything you’ll need to survive a complete catastrophe.)

Operating System

Operating-system-based backups are an easy and comprehensive layer to work with when restoring data during a catastrophe. These backups usually contain a snapshot of system files and resources that won’t otherwise be captured by application-specific backups. Software solutions from Symantec, EaseUS, Acronis, and even built-in OS-level services allow administrators to specify files, folders, drives, or entire images of an operating system. These backups can be scheduled as full, incremental, differential, or hybrid combinations based on the environment and need. While these backup strategies can (and should) additionally include the files that application backups cover, operating system backups should be treated as a separate addition to those scheduled application backups. This is where layering and diversifying backups begin to take shape.

- Likelihood you’ll restore data from this backup method: MEDIUM (You’ll most likely use this method to immediately restore the occasional Microsoft Excel or Word documents gone awry.)

- Business recovery speed: MEDIUM (Other backup methods, depending on the issue, may be faster.)

- Insurance policy score: MEDIUM (This backup method can be very comprehensive; however, the likelihood of data corruption is high, so these backups should be routinely checked for data consistency and errors.)
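
The full, incremental, and differential schedules mentioned in this section differ only in which cutoff time decides what gets copied. The Python sketch below illustrates that rule using modification times; the `FileInfo` record and `select_files` function are hypothetical, and real products typically track changes in their own ways (archive bits, change journals, and so on).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FileInfo:
    path: str
    modified: float  # modification time as a Unix timestamp


def select_files(files: list[FileInfo], mode: str,
                 last_full: float, last_backup: float) -> list[str]:
    """Pick the files a backup run would copy for the given mode."""
    if mode == "full":
        return [f.path for f in files]  # everything, every time
    if mode == "differential":
        cutoff = last_full              # changes since the last full backup
    elif mode == "incremental":
        cutoff = last_backup            # changes since the last backup of any kind
    else:
        raise ValueError(f"unknown backup mode: {mode}")
    return [f.path for f in files if f.modified > cutoff]


files = [
    FileInfo("budget.xlsx", modified=10.0),
    FileInfo("report.docx", modified=25.0),
    FileInfo("notes.txt", modified=35.0),
]
# Last full backup at t=20, last incremental at t=30.
differential = select_files(files, "differential", last_full=20.0, last_backup=30.0)
incremental = select_files(files, "incremental", last_full=20.0, last_backup=30.0)
```

Here the differential run picks up `report.docx` and `notes.txt`, while the incremental run picks up only `notes.txt`. The trade-off on restore is that a differential needs the last full backup plus one differential set, while an incremental chain needs the full backup plus every incremental since.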

Virtual Machine (VM)

Depending on your hypervisor, backup software suites like Veeam and Nakivo have the ability to dial into the root of a VM host and create various scheduled snapshots of each virtual machine in its entirety. The unique advantage of this backup strategy is not only the ability to migrate and restore VMs to specific hardware during a catastrophe, but also the ability to dive into each VM and restore “guest OS” level files and folders. This solution, again, should not be used as a replacement for operating system and application layer backups. It is simply an additive process. VM-level snapshots can be created quickly and easily before making sizable changes to an operating system or application. If an unrecoverable error occurs, an administrator can simply revert the VM to a previous snapshot almost immediately. Think of it as a giant CTRL + Z action.

- Likelihood you’ll restore data from this backup method: HIGH (This backup method, if tested regularly, is a very flexible and rapid way to undo catastrophe and is often the first direction administrators turn to.)

- Business recovery speed: HIGH (Restoring virtual machines to previous snapshots in a matter of minutes makes this backup method both reliable and speedy.)

- Insurance policy score: HIGH (Assuming proper archival and geo-location based backup strategies are in place for the data this backup method produces, virtual machine backups rate highly when considering liability and insurance.)

SAN/NAS Block Layer

Storage Area Network (SAN) and Network Attached Storage (NAS) solutions often include their own backup strategy that emphasizes speed of recovery rather than longevity of data. These backups work by cataloging the raw data written to storage disks. This allows administrators to restore entire disks, which may contain many virtual machines at once, in a matter of moments. Due to the unique nature of block-level snapshots, the system creating these backups has no idea what type of data it is backing up. Operating system or application-specific data cannot be extracted. This snapshot method can only restore entire drives or target paths, so it is often a last resort for many.

- Likelihood you’ll restore data from this backup method: LOW (Depending on the environment, this is often used for higher-level catastrophes.)

- Business recovery speed: HIGH (Restoring target drives using this method is typically very fast depending on the hardware and amount of data.)

- Insurance policy score: MEDIUM-LOW (While extremely important to include in a backup strategy, the data that is written and considered a backup is often not stored for long periods of time and is highly volatile.)
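
To show why a block-level snapshot cannot hand back a single file, here is a toy Python sketch of changed-block tracking. It treats a volume as a plain byte string of fixed size and uses an assumed 4 KiB block size; real SAN/NAS snapshot implementations are vendor-specific and considerably more involved.

```python
import hashlib

BLOCK_SIZE = 4096  # assumed block size for this sketch


def split_blocks(image: bytes, block_size: int = BLOCK_SIZE) -> list[bytes]:
    """Cut a raw volume image into fixed-size blocks."""
    return [image[i:i + block_size] for i in range(0, len(image), block_size)]


def changed_blocks(old: bytes, new: bytes,
                   block_size: int = BLOCK_SIZE) -> dict[int, bytes]:
    """Return {block index: new contents} for every block that differs."""
    if len(old) != len(new):
        raise ValueError("this sketch assumes a fixed-size volume")
    delta = {}
    pairs = zip(split_blocks(old, block_size), split_blocks(new, block_size))
    for index, (before, after) in enumerate(pairs):
        # Compare digests only; nothing here knows about files or folders.
        if hashlib.sha256(before).digest() != hashlib.sha256(after).digest():
            delta[index] = after
    return delta


def apply_blocks(base: bytes, delta: dict[int, bytes],
                 block_size: int = BLOCK_SIZE) -> bytes:
    """Rebuild a whole volume image from a base image plus changed blocks."""
    blocks = split_blocks(base, block_size)
    for index, block in delta.items():
        blocks[index] = block
    return b"".join(blocks)
```

The only unit this code understands is a numbered block, so the only thing it can restore is the whole volume. That is the limitation, and also the reason for the speed.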

Layers And Locations

It may not be appropriate or even possible to replicate each backup method off-site. Some data and bandwidth restrictions may only allow incremental operating system backups and full VM snapshots to be sent off-site to a secured location. Others may find application backups can be sent off-site and VM snapshots can be stored in another building on the same campus. The goal is to incorporate all layers at the main or mission-critical source. From there, careful Disaster Recovery (DR) planning should determine the best route for not only how to store data off-site, but also how easily it can be accessed to perform restore actions.
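
As a planning aid, the goal above can be written down as a simple check: every layer present at the primary site, with a clear list of which layers exist nowhere else. The Python sketch below assumes a plan expressed as a mapping from location name to the layers stored there; the location names are made up for illustration.

```python
LAYERS = ("application", "os", "vm", "block")  # the four layers discussed in this article


def review_plan(plan: dict[str, set[str]], primary: str) -> dict[str, list[str]]:
    """Report layers missing at the primary site and layers kept only there."""
    at_primary = plan.get(primary, set())
    elsewhere = set()
    for location, layers in plan.items():
        if location != primary:
            elsewhere |= layers
    return {
        "missing_at_primary": [
            layer for layer in LAYERS if layer not in at_primary
        ],
        "primary_only": [
            layer for layer in LAYERS
            if layer in at_primary and layer not in elsewhere
        ],
    }


# Hypothetical plan: all layers on site, OS and VM backups also sent off-site.
plan = {
    "headquarters": {"application", "os", "vm", "block"},
    "offsite-vault": {"os", "vm"},
}
review = review_plan(plan, primary="headquarters")
```

For this plan nothing is missing at the primary site, and the application and block layers show up as `primary_only`. Whether that is acceptable is a DR planning decision, not something the check can answer.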

Of course, monitoring these backups for errors using an IT infrastructure monitoring solution is important, but it cannot be considered a replacement for routine backup testing.